The pace of change in AI is disorienting. Models turn over faster than planning cycles, the vendor landscape has sprawled into hundreds of tools all claiming to transform your commercial motion, and the rate of iteration is accelerating. This is landing at a particularly consequential moment for PE: the low-interest-rate era, when financial engineering could do most of the heavy lifting in value creation, is behind us. The firms generating the best returns today are doing it through operational improvement, and GTM has become a critical lever. Hiring at portcos is increasingly oriented around commercial capability, and the question of how to scale revenue without proportionally scaling headcount has moved to the center of every value creation conversation.

The result is a specific kind of anxiety we hear about constantly from GTM leaders and PE operators alike: an overwhelming desire to do something with AI, combined with genuine uncertainty about what that something is. Some functions have found clarity. Engineering and support, for example, both have trackable ROI and relatively contained error costs. GTM is different, and we wrote about why in Three Laws of GTM:

"Generic outreach is just noise. You win by knowing something about your customers that competitors don't… Your only sustainable advantage is your ability to discover new advantages faster than competitors."

Iterative speed, then, is AI's real differentiator in GTM. Most PE portfolios have already extracted the easier efficiency gains from AI: chatbots, support deflection, and coding assistants as cost-saving plays. But the real GTM upside is agility. As Clay cofounder Varun Anand suggests on Hg's Orbit podcast, GTM alpha (the edge that lets you win deals competitors can't) now erodes in two weeks rather than three months. The companies that win are no longer the ones who find one great campaign and scale it. They're the ones who can discover new advantages continuously, faster than competitors can copy them.

That adaptive philosophy is what we at Clay bring to our work with PE firms and their portfolios. One of those firms is Hg, a leading investor in European and transatlantic technology and services businesses with over $100 billion in assets under management; 62% of its portfolio companies are now in active pilots or implementation. What follows is the playbook we've developed from our work with Hg and a few other PE partners.

TL;DR

- AI-enabled GTM is now a primary value creation lever for PE: the biggest gains are in sales productivity and GTM automation, not just engineering or support cost reduction.

- Not all AI GTM tools are production-ready. Account research, enrichment, and rep-in-the-loop workflows deliver reliable ROI today. Fully autonomous outbound SDRs consistently underperform and damage domain reputation.

- Data quality is the highest-ROI starting point. Portcos that fix contact coverage and CRM hygiene first see faster, more durable downstream gains from every subsequent AI workflow.

- AI-enabled GTM compounds across a portfolio. PE firms that standardize GTM infrastructure across portcos can identify winning playbooks at one company and deploy them across ten others, faster than any single company can replicate alone.

How AI-Enabled GTM Is Reshaping the Rule of 40

PE-backed software companies live and die by the Rule of 40: a company's revenue growth rate plus its profit margin should be at least 40%. That benchmark is about to shift structurally, and GTM is one of the functions where the shift will be most pronounced.
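As a quick illustration of the arithmetic, here is a minimal Python sketch of the Rule of 40 check. The function names and the two example portcos are hypothetical, chosen only to show how growth and margin trade off against each other; "profit margin" can be whichever definition a given firm uses (EBITDA or free-cash-flow margin are common), and the sketch treats exactly 40% as passing.

```python
def rule_of_40_score(revenue_growth_pct: float, profit_margin_pct: float) -> float:
    """Combined score: revenue growth rate plus profit margin, both in percent."""
    return revenue_growth_pct + profit_margin_pct


def passes_rule_of_40(revenue_growth_pct: float, profit_margin_pct: float) -> bool:
    """True when the combined score reaches the 40% benchmark."""
    return rule_of_40_score(revenue_growth_pct, profit_margin_pct) >= 40.0


# Hypothetical portcos: a fast grower burning cash and a slower, profitable one.
examples = {
    "high_growth": (55.0, -10.0),  # 55% growth, -10% margin -> score 45
    "profitable": (15.0, 20.0),    # 15% growth, 20% margin -> score 35
}

for name, (growth, margin) in examples.items():
    score = rule_of_40_score(growth, margin)
    print(f"{name}: score={score:.0f}, passes={passes_rule_of_40(growth, margin)}")
```

Holding growth constant, every percentage point of revenue that S&M no longer consumes lifts the margin term by one point, so the "profitable" example would need five points of S&M compression to clear the bar.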

Two cost lines are already compressing measurably. AI code assistants are delivering 20–45% productivity gains per developer, according to McKinsey's controlled studies of engineering spend. AI-powered support tools like Intercom Fin, Decagon, and Sierra are handling tier-one tickets and routine triage at scale. These are real, capturable gains and, increasingly, a baseline expectation among sophisticated buyers evaluating software companies.

The less-explored frontier is S&M. Sales and marketing runs at 20–50% of revenue in growth-stage B2B SaaS; it's routinely the single largest cost line in a portfolio company's P&L. It's less explored not because the opportunity is smaller, but because GTM is harder to automate. For operators who understand how to sequence the rollout, though, the math is compelling. Agentic workflows can compress the time BDRs spend on research, targeting, personalization, and execution, which in turn drives up pipeline and revenue per rep.

The growth-side math is equally compelling, and it typically starts with something simpler than operators expect: data quality. When a portco increases contact coverage by filling gaps in enrichment, cleaning up duplicates, and building a more complete org-level picture of its ICP, the downstream effects are concrete. Verkada, for example, increased contact data coverage across its TAM from 50% to 80%. With that coverage, reps reach the right people on first contact, expansion workflows fire on complete records, and automated targeting becomes reliable. That 30-percentage-point improvement in coverage translates directly into pipeline and revenue. It's also usually the highest-ROI intervention available at the start of a transformation, because everything downstream depends on and benefits from it.

There's a second dimension worth highlighting here as portcos make decisions on GTM vendors. A typical portco's GTM stack is often made up of 10 or more point solutions, from workflow tools to enrichment vendors to sequencing platforms, and all of them are under competitive pressure from AI. Agentic coding tools now allow startups to replicate mid-market SaaS functionality in weeks, undercutting legacy pricing models and forcing enterprise buyers to ask whether they should build rather than buy. This makes the infrastructure-versus-tooling distinction more consequential than it might appear in a normal market. Point solutions built around automating a specific manual workflow face a different competitive dynamic than infrastructure built around orchestrating data and driving action across the commercial function. The former can be replicated; the latter compounds with use. In other words, GTM vendor decisions at portcos aren't just procurement calls. They're compounding infrastructure decisions that will determine how fast the commercial function learns, adapts, and widens its lead over the next five years.

What Works, What Doesn't, and Why Most Vendor Pitches Get It Wrong

PE operating partners are sitting through a lot of vendor pitches right now. There are autonomous SDR companies, AI email platforms, and "agentic outbound" startups galore. A handful will generate real returns. The majority will burn through budget and erode organizational trust in AI as a category, the kind of damage that takes quarters to repair and makes every subsequent implementation harder to justify internally.

We work closely with GTM teams and see how hundreds of mid-market and enterprise companies structure their GTM workflows. From that vantage point, the landscape breaks into three tiers.

Production-ready today: account research and enrichment, company scoring with transparent logic, and pre-meeting preparation that combines CRM history with third-party enrichment. These work for a specific reason: the correctness bar is relatively low, outputs are easy for a human to validate in seconds, and mistakes don't damage customer relationships. A company score reading '85: hiring RevOps, raised Series B, matches ICP on six dimensions' is useful at 80% accuracy, and it gets better as the data improves. For a 50-person sales team where each rep takes four meetings a day, automated research reclaims 50+ hours per week, roughly the output of a full-time research analyst.
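"Transparent logic" is doing real work in that example: the score carries its reasons with it, so a rep can check it at a glance. The Python sketch below illustrates the idea under stated assumptions. The signals, point weights, and the `Account` fields are hypothetical, picked so the example reproduces the '85' score above; this is not Clay's scoring model or any product's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Account:
    name: str
    open_roles: list[str] = field(default_factory=list)
    last_funding_round: str | None = None
    icp_dimensions_matched: int = 0
    icp_dimensions_total: int = 6


def score_account(account: Account) -> tuple[int, list[str]]:
    """Return a 0-100 score plus the human-readable reasons behind it."""
    score = 0
    reasons: list[str] = []

    # Hypothetical signal: an open RevOps role suggests GTM investment.
    if any("revops" in role.lower() for role in account.open_roles):
        score += 25
        reasons.append("hiring RevOps")

    # Hypothetical signal: a recent Series B round.
    if account.last_funding_round == "Series B":
        score += 20
        reasons.append("raised Series B")

    # ICP fit contributes up to 40 points, in proportion to dimensions matched.
    if account.icp_dimensions_total > 0 and account.icp_dimensions_matched > 0:
        fit = account.icp_dimensions_matched / account.icp_dimensions_total
        score += round(40 * fit)
        reasons.append(f"matches ICP on {account.icp_dimensions_matched} dimensions")

    return min(score, 100), reasons


def explain(account: Account) -> str:
    """Format the score the way a rep would see it: number first, then reasons."""
    score, reasons = score_account(account)
    return f"{score}: {', '.join(reasons) if reasons else 'no signals matched'}"


acme = Account("Acme", open_roles=["RevOps Manager"], last_funding_round="Series B",
               icp_dimensions_matched=6)
print(explain(acme))  # -> 85: hiring RevOps, raised Series B, matches ICP on 6 dimensions
```

Keeping the reasons next to the number is the design choice that makes an 80%-accurate score usable: a rep who knows the account can spot a wrong reason immediately instead of having to trust an opaque figure.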

Rep-in-the-loop workflows: personalized messaging, contact prioritization, and basic objection handling. The consistent pattern here is that AI drafts and humans validate before anything reaches a prospect. A rep can review ten AI-generated emails in the time it takes to write two from scratch. But removing the human review step introduces errors on three fronts: tone, relevance, and factual accuracy. All three damage response rates and brand perception in ways that compound over time. The economics still favor a human-reviewed AI workflow over a fully manual one, and in our experience, reps prefer it once they've used it for a week.

Not ready for production yet (this is where most of the damage happens): fully autonomous outbound SDRs, where AI handles TAM sourcing, targeting, messaging, sending, objection handling, and meeting booking without human involvement. The failure pattern is remarkably consistent. In week one, it looks promising, with a few meetings booked: just enough to generate false confidence. In week two, open rates decline as the system trains spam filters against the company domain. In week three, messaging quality degrades, because there is no learning loop, only repetition at scale. By the end of the first month, it has burned through target accounts, damaged domain reputation, and generated minimal pipeline.

One thing we hear consistently from operators who've been through successful implementations is that the technology is the easy part. The harder challenge is change management, which includes re-skilling GTM leadership and practitioners to think differently about their work in an AI-native environment. Long-entrenched workflows, quarterly campaign planning, and siloed team structures don't change just because a new tool gets deployed. Leaders need to invest in helping their people develop new mental models alongside the new infrastructure.

We have seen repeatedly that starting with narrow, high-accuracy use cases (account research, intent signal tracking, pre-meeting briefs, and inbound qualification and routing) and expanding from there consistently outperforms broad deployments that spread across too many tasks at once.

One caveat to this framing: these tiers shift as the technology matures. What's unreliable today may be production-ready within months or years. The evaluation framework stays constant regardless: assess accuracy in context, measure the cost of errors, decide whether human oversight is worth the throughput tradeoff, and revisit the tier placement quarterly.

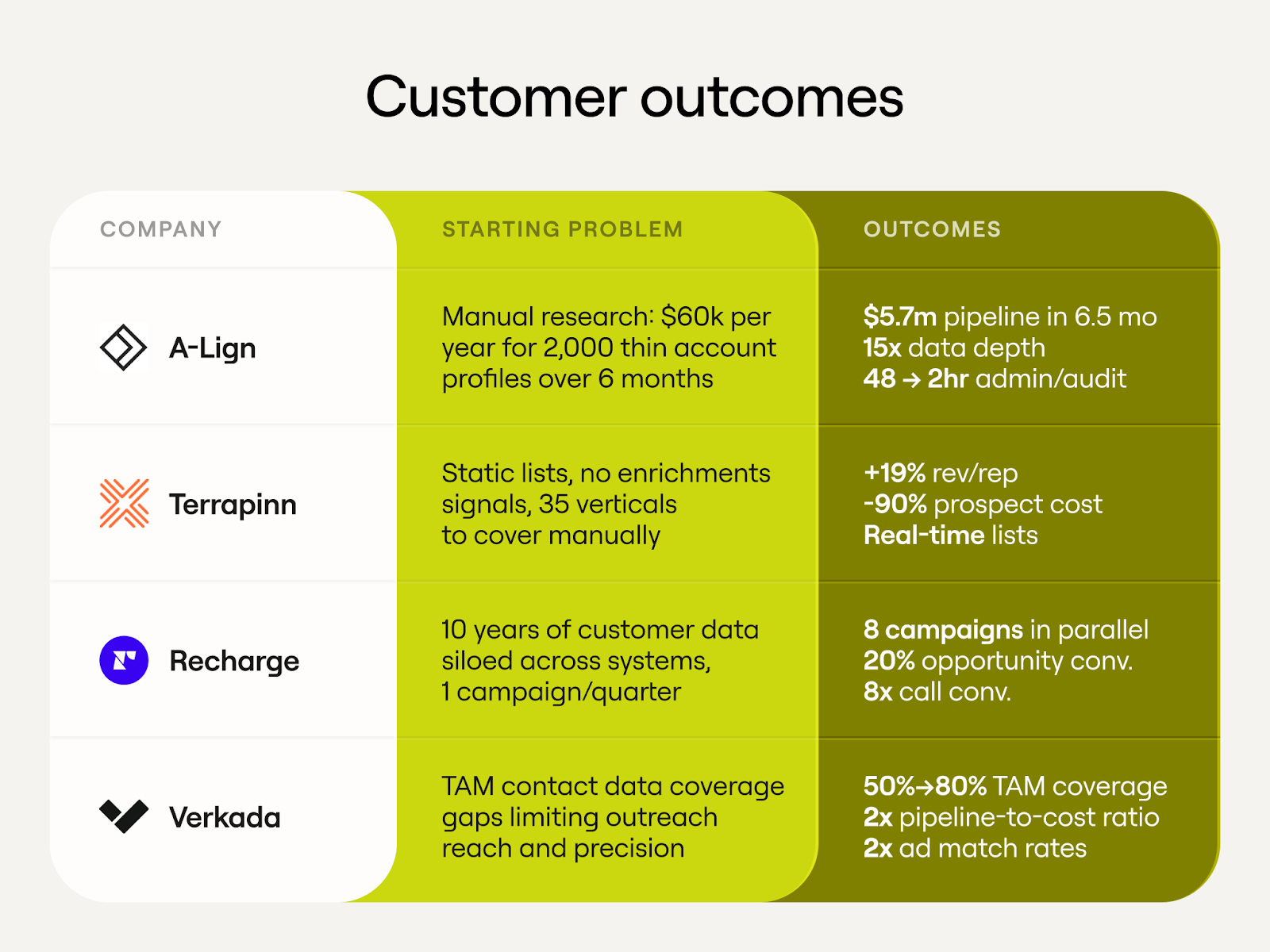

AI-Enabled GTM in Action: Portfolio Company Results

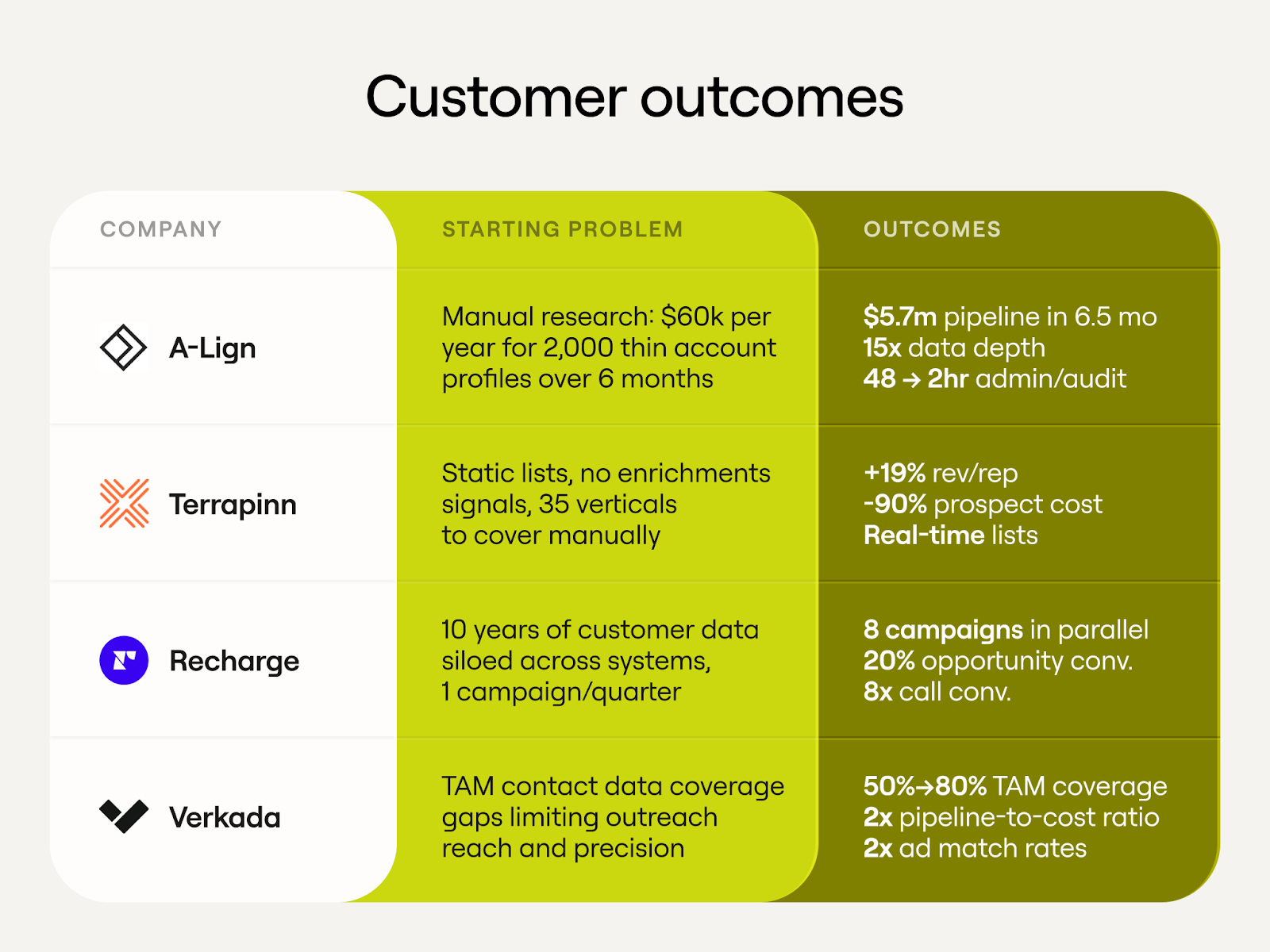

A-LIGN (Hg portfolio company) is a cybersecurity compliance firm serving 5,000+ organizations globally. The starting point was familiar to anyone who's audited a portco's commercial function. A-LIGN was paying $60K annually for a contractor to manually research 2,000 accounts over six months. The output was a spreadsheet with basic yes/no answers: Does this company do SOC 2 audits? Yes. Does it have a compliance program? Yes. Accurate, but thin, and not particularly actionable.

Their ops leader identified the core problem clearly: the data told reps that a company does SOC 2 audits, but what reps actually needed to know was who does their SOC 2 and why. Which competitor holds the relationship? What's the contract cycle? What compliance framework gaps exist? The data wasn't actionable. It was a filing cabinet with 2,000 folders and no context inside any of them.

Building automated research workflows on Clay, A-LIGN replaced six months of manual work with one month of automated execution. 30,000 basic data points became 450,000 detailed insights, a 15x increase in data depth at a cost of $50K for the platform, down from $60K for the manual contractor. At the same time, Salesforce admin work dropped from 48 hours to 2 hours per cycle.

Now, instead of telling reps "this company does compliance," the system showed which specific services they used, why they needed them, which competitors held the relationship, and where the switching triggers were. That intelligence powered a competitive displacement workflow that couldn't have existed without the data layer underneath it. The result was $5.7M in qualified pipeline from competitive displacement research alone and $3.3M in closed revenue directly attributed to displacement intelligence.

The cultural shift that followed may be the most durable outcome. Non-technical reps started defaulting to "can we automate this?" when they hit a manual process. A-LIGN now treats Clay as core revenue infrastructure, which is the difference between a software purchase and an operating and cultural shift.

Terrapinn, a global B2B events company with 150 sales reps covering 35 industry verticals, had a different bottleneck: their third-party data provider delivered static lists with no enrichment, no signals, and no context about why a prospect belonged at a specific event. Clay replaced months of researcher work with enriched, signal-based prospect lists built in minutes, ICP-matched across all 35 verticals, updated in real time. Revenue per rep increased 19% while prospect acquisition cost dropped 90%. For a 150-person sales organization, that combination changes the unit economics of the commercial model materially.

Recharge, the subscription billing platform processing $30 billion annually for 20,000+ merchants, had the opposite problem. It wasn't missing data; it had ten years of customer and product data sitting in siloed systems. Clay unified that data and connected it to campaign execution. One campaign per quarter became eight simultaneous campaigns. Opportunity conversion improved 20%, meeting conversion improved 12%, and call conversion improved 8x. Instead of adding net-new data points, Clay made the existing data actionable.

These aren't isolated wins. They're outputs of the same underlying architecture, which is vertical-agnostic by design. A-LIGN's workflow runs on enriched account intelligence, competitor relationship mapping, and contract cycle signals. Terrapinn's runs on enriched firmographics and event-matched ICPs. Recharge's runs on unified product data connected to campaign execution. The surface details differ by industry, but the architecture does not: a workflow built for cybersecurity compliance can be reconfigured for healthcare SaaS, professional services, or fintech by swapping the inputs and keeping the logic intact, because the underlying pattern is always source, enrich, score, trigger, and act. That's what makes GTM playbook building a compounding asset for PE operators specifically. Each implementation produces a documented template and makes the next deployment faster and cheaper.
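To make "inputs swapped, logic intact" concrete, here is a minimal Python sketch of a source, enrich, score, trigger, and act pipeline in which only a per-vertical configuration changes. Every function, field, account name, and threshold below is hypothetical; a real deployment would pull from actual data providers and write results to a CRM rather than returning an in-memory list.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


@dataclass(frozen=True)
class VerticalConfig:
    """The per-vertical inputs; the pipeline logic in run_pipeline never changes."""
    name: str
    source: Callable[[], List[Record]]   # where candidate accounts come from
    enrich: Callable[[Record], Record]   # adds vertical-specific fields
    score: Callable[[Record], float]     # 0-100 fit score
    threshold: float                     # minimum score that triggers action
    action: str                          # label for the downstream play


def run_pipeline(cfg: VerticalConfig) -> List[str]:
    """Source, enrich, score, trigger, and act, always in that order."""
    actions: List[str] = []
    for raw in cfg.source():                                   # 1. source
        record = cfg.enrich(dict(raw))                         # 2. enrich a copy
        record["score"] = cfg.score(record)                    # 3. score
        if record["score"] >= cfg.threshold:                   # 4. trigger
            actions.append(f"{cfg.action}: {record['name']} ({record['score']:.0f})")  # 5. act
    return actions


# Hypothetical compliance config: flag accounts whose audits a competitor holds.
compliance = VerticalConfig(
    name="compliance",
    source=lambda: [{"name": "Acme"}, {"name": "Globex"}],
    enrich=lambda r: {**r, "incumbent_auditor": "CompetitorCo" if r["name"] == "Acme" else None},
    score=lambda r: 90.0 if r["incumbent_auditor"] else 30.0,
    threshold=70.0,
    action="competitive displacement outreach",
)

# Hypothetical events config: same pipeline, different inputs and scoring.
events = VerticalConfig(
    name="events",
    source=lambda: [{"name": "Initech"}, {"name": "Umbrella"}],
    enrich=lambda r: {**r, "vertical_match": r["name"] == "Umbrella"},
    score=lambda r: 80.0 if r["vertical_match"] else 20.0,
    threshold=70.0,
    action="event invite",
)

for cfg in (compliance, events):
    print(cfg.name, run_pipeline(cfg))
```

The point of the split is that `run_pipeline` is the reusable template: moving to a new portco means writing a new `VerticalConfig`, not a new pipeline.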

The Diligence Red Flag Isn't the Metrics

The most important thing to understand about AI-enabled GTM is that it compounds. A company that builds clean data infrastructure, instruments its signals, and connects those signals to automated action gets more efficient and more intelligent over time. More campaigns generate more outcome data, better outcome data improves targeting and better targeting improves conversion. Higher conversion funds more experimentation. Each cycle tightens the loop. The advantage is exponential.

Companies without data and orchestration infrastructure can't access this loop. They run campaigns manually, gather data inconsistently, and make decisions on intuition or lagging reports. Each quarter, they're roughly as good at GTM as they were the quarter before. Meanwhile, the company with the right infrastructure layer is compounding. That gap is what we suspect sophisticated buyers are beginning to price into diligence.

"Clean, enriched CRM data is now table stakes across the portfolio, you can't run a modern GTM organization without it," said Jack Claydon, AI Value Creation at Hg. "What's exciting is what comes next: orchestration workflows that automate key commercial processes, driving efficiency gains whilst simultaneously improving sales performance. That's where the real value creation is happening."

The metrics that surface this gap aren't new, but their interpretation has changed. Historically, a declining win rate or rising CAC signaled an execution problem: the wrong messaging, the wrong reps, or the wrong segments. Today those same signals increasingly indicate a structural deficit: the absence of feedback infrastructure that would have caught and corrected those problems automatically. The question a buyer is now asking isn't just "why are these numbers bad?" It's "does this company have the infrastructure to get better, or will we have to build it from scratch?"

The compounding also operates across a portfolio, not just within a single company. Firms that build AI-enabled GTM infrastructure consistently across multiple portcos can identify what "good" looks like in a given segment by observing what works at scale, and then replicate it systematically. Which outbound signals most reliably predict sub-90-day sales cycles in vertical SaaS? Which expansion triggers drive the highest NRR in the $5–20M ARR range? Which GTM tactics hold up best as the market keeps shifting? These are questions you can only answer with data from many companies.

"Most PE firms talk about learning from their portfolio, we are actually doing it. Similar GTM infrastructure across nearly 60 companies means patterns surface fast. Which playbooks drive pipeline in vertical SaaS, which use cases transfer directly, which ones need adapting. The rebuild-from-scratch tax disappears. That's where the compounding starts," said Chris Ross, Head of Portfolio Growth at Hg.

This is where PE firms have a structural advantage that individual companies don't. When the moat is speed, the ability to identify a winning tactic at one portco and deploy it across ten others compounds the benefit in a way no single company can replicate alone.

Ten years ago, ERP infrastructure became a diligence discount. Companies with fragmented back-office systems saw valuations marked down as buyers priced in the remediation cost. We believe GTM infrastructure will follow the same pattern. What's different this time is that the penalty goes beyond remediation cost to include the compounding value the company has already failed to accumulate: the campaigns not run, the signals not captured, and the feedback loops not closed. That's harder to price and harder to recover. Companies with defensible GTM infrastructure are being underwritten with conviction; companies without it are facing a new kind of scrutiny that goes well beyond what shows up in a standard QoE.

"We've seen this process play out before with ERP," said Steven Wastie, GTM Operating Partner at H.I.G. Capital. "The companies that dragged their feet lost years of operating leverage they couldn't get back. GTM infrastructure will be following the same script as AI redefines workflows, systems and organizations."

Where the Space Is Going

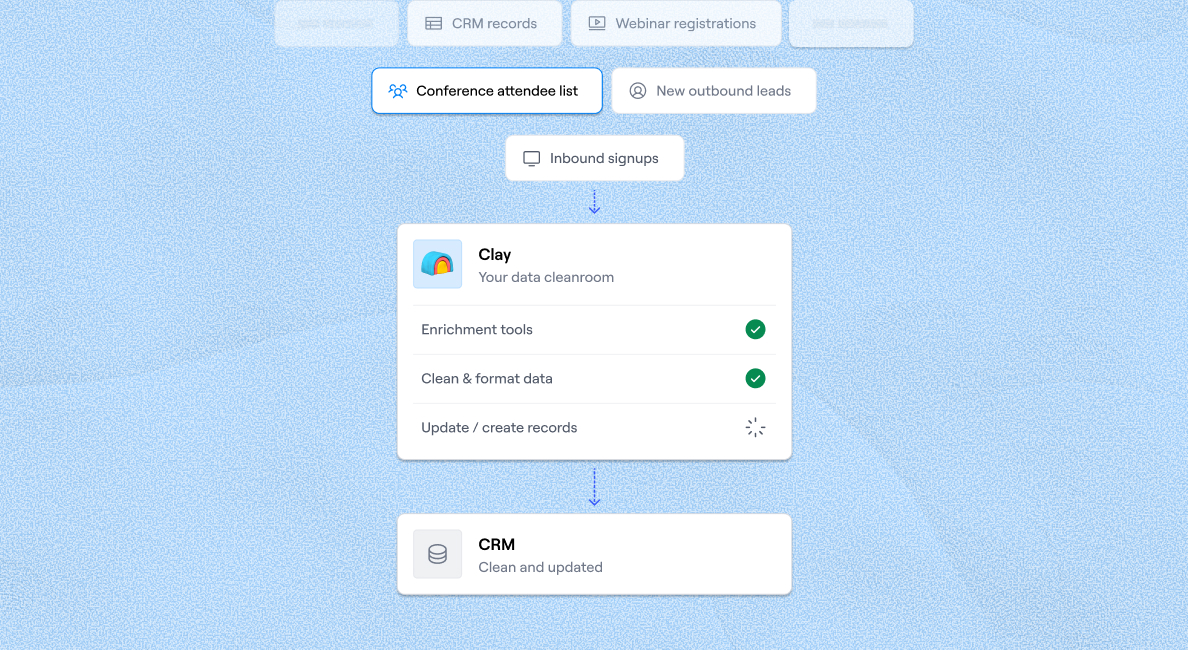

There's a deeper shift happening beneath the tactical question of which GTM workflows to automate, and it's worth naming directly when making infrastructure decisions for a portfolio company. The previous generation of GTM software was built primarily as a system of record. The CRM captured what happened, the MAP tracked engagement and the intent tool logged signals. Data flowed in, got cleaned, got organized, and sat there waiting for a human to act on it. The system's job was to remember.

What's emerging now is something categorically different: GTM infrastructure as a system of action and context. The gap between signal and response collapses. A company posts a job description for a RevOps hire; that posting triggers a workflow that enriches a contact record, scores the account against the ICP, drafts a personalized outreach sequence, and routes it to the right rep, all before a human has opened their laptop. The system interprets, decides, and acts.

That shift changes the infrastructure decision significantly. In a system-of-record world, the key questions were about data quality and CRM hygiene. In a system-of-action world, the key question is whether your infrastructure is built to orchestrate: whether it can connect signals to actions across tools, contexts, and workflows without requiring a human to mediate every step.

It also changes what "competitive moat" means in a commercial context. A lot of GTM over the past decade was built, implicitly, on friction and information asymmetry: on knowing things about prospects that competitors didn't, or on buyer inertia making it easier to stay than to switch. AI is systematically dissolving both. Information that used to require six browser tabs and a research contractor is now a single prompt. Switching costs that relied on buyer inertia are being optimized away as agents evaluate alternatives on pure fit and price. The GTM approaches that hold their value in this environment don't capitalize on friction. They're built around speed, context, and the ability to act on better signals faster than anyone else. That's the thread connecting the Three Laws of GTM to the infrastructure argument: the sustainable advantage was never the information itself; it was always the ability to find new advantages faster than competitors could copy the last ones.

One question PE operators are increasingly fielding from portco leadership: "We're already getting pitched by OpenAI and Anthropic directly; do we need anything else?" The answer matters for how you think about the infrastructure stack.

The model layer and the orchestration layer solve different problems. OpenAI and Anthropic provide the reasoning capability, that is, the intelligence that can interpret a signal, draft a message, or summarize a call transcript. What they don't provide is the surrounding structure that makes that intelligence usable in a real business: the data pipelines that feed clean, enriched inputs into the model, the guardrails that keep outputs within defined parameters, the workflow logic that triggers the right action at the right time, and the handoff layer that writes results back into Salesforce, the data warehouse, or whatever system of record the portco runs on.

The orchestration layer matters especially for PE value creation teams, because what they need isn't open-ended AI experimentation. They need low-risk, repeatable playbooks they can deploy across a portfolio and defend in a board meeting. That means packaging proven GTM use cases, reducing the in-house build burden, and connecting model intelligence to the commercial systems portcos already use.

It also matters for the parts of the portfolio that don't look like SaaS. As more PE firms expand into healthcare, business services, and industrials, the GTM infrastructure problem gets harder. Data is more fragmented, workflows are more complex, and the sales motion often involves longer cycles, more stakeholders, and less digital exhaust to work from. These are exactly the conditions where the data and orchestration layer adds the most value: clean, well-formatted data doesn't exist yet, and someone has to find it and impose structure on it before a model can do anything useful. A healthcare services company with patient records in one system, referral relationships in another, and billing data in a third isn't going to get value from a model on its own. It needs an enrichment and workflow layer that can unify those inputs and connect them to the commercial motion. That's a solvable problem, and the firms building this infrastructure in non-SaaS verticals now are accumulating a compounding advantage over competitors who are still waiting for their data to get clean on its own.

The firms that understand these distinctions (infrastructure vs. tooling; system of action vs. system of record) are the ones building durable commercial advantages in their portfolios. Within 18–24 months, the practical expectation is that autonomous agents will handle significant portions of account management, outbound sequencing, and expansion intelligence, while humans focus on relationship building and high-judgment tasks. The companies positioned to deploy those agents well will be the ones building the data foundation and adopting the right infrastructure now.

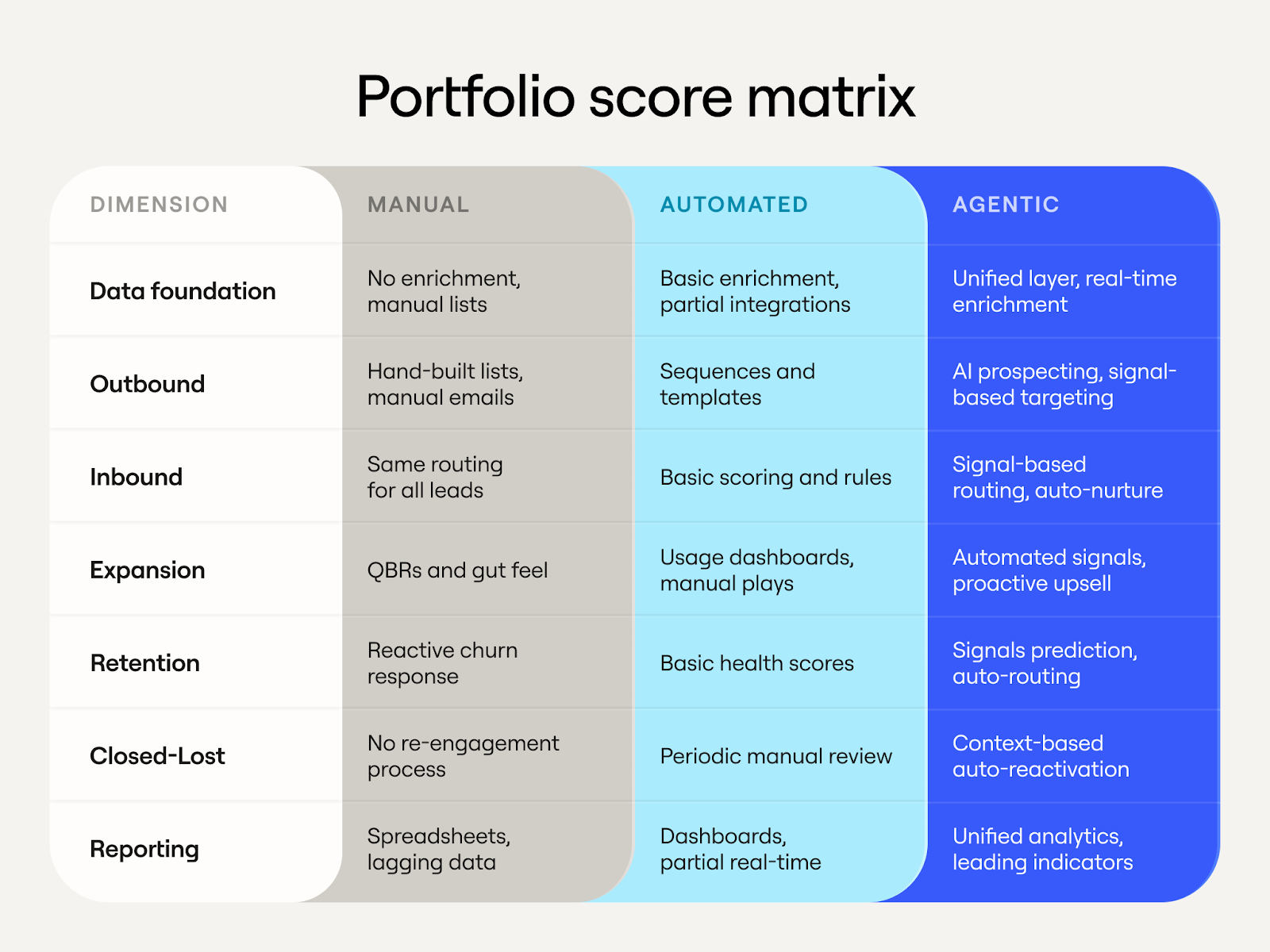

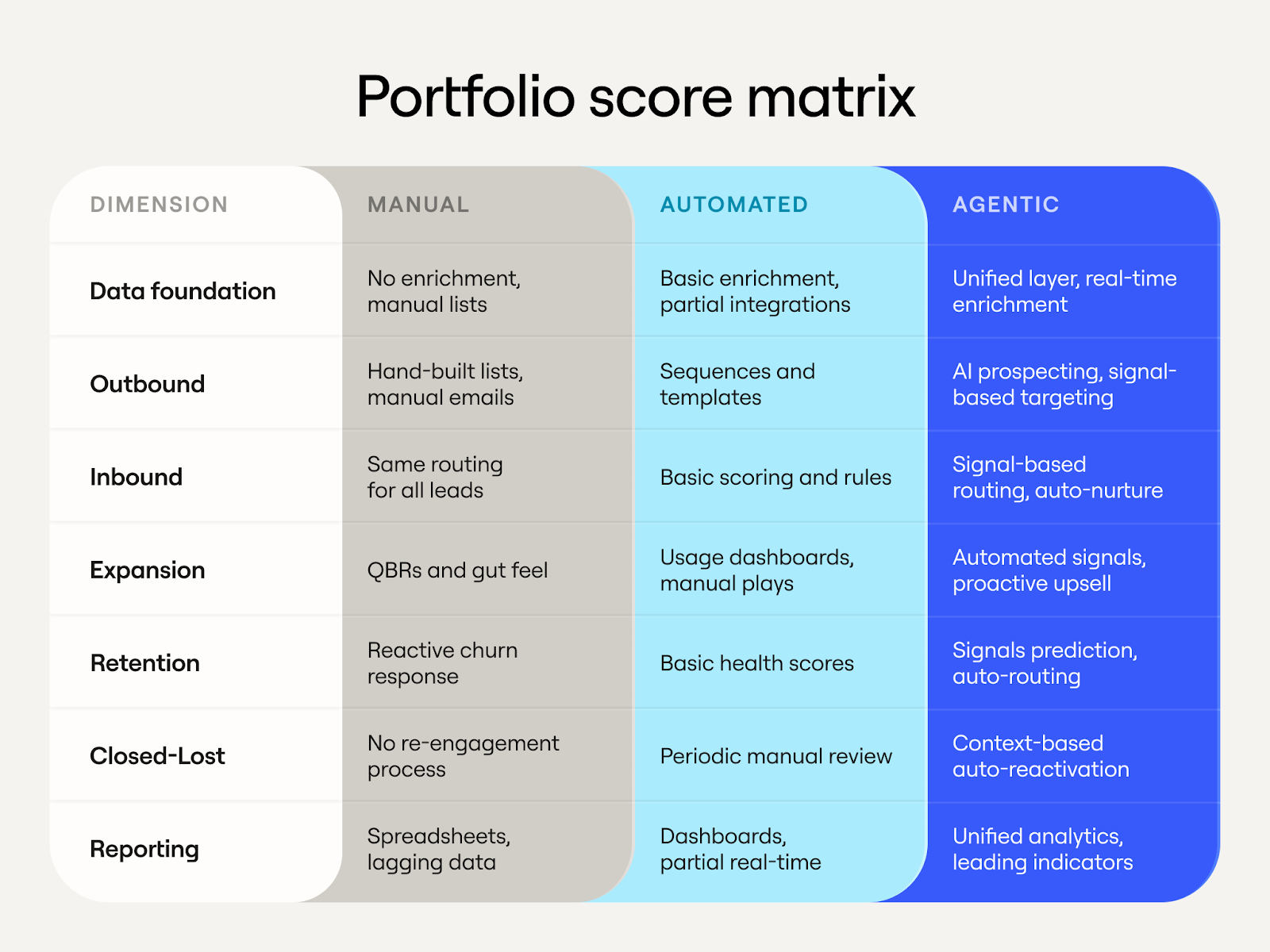

Score Your Portfolio

The diligence table tells you where the problems are. This rubric gives you a starting point for how far each portco has to go. Score each dimension on a 1–3 scale. It typically takes about 15 minutes per company and surfaces the intervention priorities quickly.

Weighting note: if Data Foundation scores a 1, start there regardless of what the other dimensions show. Nothing downstream works reliably without clean, enriched, unified data. It's the dependency everything else sits on, and it's also where the ROI on fixing it tends to show up fastest.

Total scores of 7–10 indicate that immediate action is needed. The foundation work has to come first: clean, deduplicate, enrich, and establish a single source of truth for TAM definition.

Scores of 11–16 mean the foundation exists and needs acceleration; the focus shifts to deploying AI workflows across core revenue motions.

Companies approaching 17–21 are ready to work on feedback loops and compounding advantages. That's the flywheel: more usage generates richer data, which in turn generates better performance.
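The scoring bands and the Data Foundation override above reduce to a few lines of logic. The minimal Python sketch below assumes seven dimensions, which is what a 7–21 total on a 1–3 scale implies. Apart from Data Foundation, the dimension names (`dim_2` through `dim_7`) are hypothetical placeholders to be replaced with the rubric's own, and the returned labels are shorthand for the bands described above.

```python
from typing import Dict

# Seven dimensions scored 1-3 give the 7-21 range that the bands use.
EXPECTED_DIMENSIONS = 7


def triage(scores: Dict[str, int]) -> str:
    """Map one portco's rubric scores to an intervention priority."""
    if len(scores) != EXPECTED_DIMENSIONS:
        raise ValueError(f"expected {EXPECTED_DIMENSIONS} dimensions, got {len(scores)}")
    if "Data Foundation" not in scores:
        raise ValueError("missing the 'Data Foundation' score")
    if any(value not in (1, 2, 3) for value in scores.values()):
        raise ValueError("each dimension must be scored 1, 2, or 3")

    # Weighting note: Data Foundation at 1 means start there, whatever the total.
    if scores["Data Foundation"] == 1:
        return "foundation first (Data Foundation override)"

    total = sum(scores.values())
    if total <= 10:
        return "foundation first"        # 7-10: immediate action needed
    if total <= 16:
        return "accelerate workflows"    # 11-16: deploy AI across core revenue motions
    return "build feedback loops"        # 17-21: compounding advantages


# Hypothetical portco: dim_2 through dim_7 stand in for the rubric's other dimensions.
portco = {"Data Foundation": 3, **{f"dim_{i}": 2 for i in range(2, 8)}}
print(sum(portco.values()), triage(portco))  # -> 15 accelerate workflows
```

Checking the override before the total mirrors the weighting note: a portco can post a respectable total and still be routed to foundation work if its data layer scores a 1.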

Frequently Asked Questions

What does AI-enabled GTM actually mean for a PE portfolio company?

AI-enabled GTM means connecting data, enrichment, signals, and automated workflows so that commercial teams can act on better information faster, without proportionally scaling headcount. For portcos, it typically starts with data quality and account research, then expands to automated targeting, personalized outreach, and signal-triggered workflows across the revenue motion.

Which AI GTM use cases are production-ready today?

Account research and enrichment, company scoring with transparent logic, pre-meeting preparation, inbound qualification and routing, and intent signal tracking are all production-ready. Rep-in-the-loop workflows for personalized messaging also deliver reliable results when humans review AI drafts before anything reaches a prospect. Fully autonomous outbound SDRs are not yet production-ready and consistently underperform in practice.

How do PE firms adapt their GTM models as AI offerings change?

The firms generating the best results start narrow, deploying high-accuracy use cases first and expanding from there. They invest in change management alongside new infrastructure, re-skilling GTM leadership to think differently about their work in an AI-native environment. They also treat GTM vendor decisions as compounding infrastructure choices, not one-off procurement calls, because the infrastructure layer determines how fast the commercial function learns and adapts over the next several years.

Why isn't it enough to just use OpenAI or Anthropic directly?

Foundation models provide reasoning capability but not the surrounding structure a real business needs: clean data pipelines, workflow logic, guardrails, and handoff layers that write results back into Salesforce or a data warehouse. PE value creation teams specifically need low-risk, repeatable playbooks they can deploy across a portfolio, and that requires an orchestration layer on top of the model layer.

How does AI-enabled GTM infrastructure affect diligence and valuation?

Sophisticated buyers are beginning to price GTM infrastructure into diligence the same way ERP infrastructure was priced in a decade ago. Companies without clean data and orchestration infrastructure face scrutiny that goes beyond standard QoE metrics. The penalty isn't just remediation cost; it's the compounding value the company has already failed to accumulate through campaigns not run, signals not captured, and feedback loops not closed.

Acknowledgments

This paper was shaped by the generous input of our partners and leading PE practitioners. Thank you to Erik Kristjanson (Vista Equity Partners), Steven Wastie (H.I.G. Capital), Jack Claydon (Hg), and Chris Ross (Hg) for their candid feedback, challenge, and perspective throughout the drafting process.

Finally, huge thanks are due to the Clay team who put this together, including Sonia Hernandez, Mary Hanley, Lindsay Workman, Karan Parekh, Sameer Ziaee, Ziqi Deng, and Ted Brown.

The pace of change in AI is disorienting. Models turn over faster than planning cycles, the vendor landscape has sprawled into hundreds of tools all claiming to transform your commercial motion, and the rate of iteration is accelerating. This is landing at a particularly consequential moment for PE: the low-interest-rate era, when financial engineering could do most of the heavy lifting in value creation, is behind us. The firms generating the best returns today are doing it through operational improvement, and GTM has become a critical lever. Hiring at portcos is increasingly oriented around commercial capability, and the question of how to scale revenue without proportionally scaling headcount has moved to the center of every value creation conversation.

The result is a specific kind of anxiety we hear about constantly from GTM leaders and PE operators alike: an overwhelming desire to do something with AI, combined with genuine uncertainty about what that something is. Some functions have found clarity. For example, engineering and support both have trackable ROI and relatively contained error costs. GTM is different and we wrote about why in Three Laws of GTM:

"Generic outreach is just noise. You win by knowing something about your customers that competitors don't… Your only sustainable advantage is your ability to discover new advantages faster than competitors."

Iterative speed, then, is AI's real differentiator in GTM. Most PE portfolios have already extracted the easier efficiency gains of AI: chatbots, support deflection, coding assistants as cost-savings plays. But the real GTM upside is about agility. As Clay's cofounder Varun Anand alludes to in Hg's Orbit podcast, GTM alpha: the edge that lets you win deals competitors can't now erodes in two weeks rather than three months. The companies that win are no longer the ones who find one great campaign and scale it. They're the ones who can discover new advantages continuously, faster than competitors can copy them.

That adaptive philosophy is what we at Clay bring to our work with PE firms and their portfolios. We're working with Hg, a leading investor in European and transatlantic technology and services businesses with over $100 billion in assets under management. 62% of their portfolio companies are now in active pilots or implementation phase (read more here). What follows is the playbook we've developed from our work with Hg and a few other PE partners.

TL;DR

- AI-enabled GTM is now a primary value creation lever for PE: the biggest gains are in sales productivity and GTM automation, not just engineering or support cost reduction.

- Not all AI GTM tools are production-ready. Account research, enrichment, and rep-in-the-loop workflows deliver reliable ROI today. Fully autonomous outbound SDRs consistently underperform and damage domain reputation.

- Data quality is the highest-ROI starting point. Portcos that fix contact coverage and CRM hygiene first see faster, more durable downstream gains from every subsequent AI workflow.

- AI-enabled GTM compounds across a portfolio. PE firms that standardize GTM infrastructure across portcos can identify winning playbooks at one company and deploy them across ten others, faster than any single company can replicate alone.

How AI-Enabled GTM Is Reshaping the Rule of 40

PE-backed software companies live and die by the Rule of 40: a company's combined revenue growth rate and profit margin should exceed 40%. That benchmark is about to shift structurally, and the GTM function is one of the areas where the shift will be most pronounced.

Two cost lines are already compressing measurably. McKinsey estimates that AI code assistants can lift software engineering productivity by an amount equal to 20–45% of current engineering spend. AI-powered support tools like Intercom Fin, Decagon, and Sierra are handling tier-one tickets and routine triage at scale. These are real, capturable gains and, increasingly, a baseline expectation among sophisticated buyers evaluating software companies.

The less-explored frontier is S&M. Sales and marketing runs at 20–50% of revenue in growth-stage B2B SaaS; it's routinely the single largest cost line in a portfolio company's P&L. It's less explored not because the opportunity is smaller, but because GTM is harder to automate. For operators who understand how to sequence the rollout, though, the math is compelling. Agentic workflows can compress the time BDRs spend on research, targeting, personalization, and execution, which in turn drives up pipeline and revenue per rep.

The growth-side math is equally compelling, and it typically starts with something simpler than operators expect: data quality. When a portco increases contact coverage by filling gaps in enrichment, cleaning duplicates, and building a more complete org-level picture of the ICP, the downstream effects are concrete. Verkada, for example, increased contact data coverage across its TAM from 50% to 80%. With that coverage, reps reach the right people on the first contact, expansion workflows fire on complete records, and automated targeting becomes reliable. That 30-percentage-point improvement translates directly into pipeline and revenue. It's also usually the highest-ROI intervention available at the start of a transformation, because everything downstream depends on and benefits from it.
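Coverage can be defined in several ways. The sketch below assumes one simple definition (the share of TAM accounts with at least one contact that has a usable email) and shows why a move from 50% to 80% is a 30-percentage-point gain but a 60% relative gain. The `Contact` record and the account IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Contact:
    account_id: str
    email: Optional[str]  # None when enrichment found no usable address


def contact_coverage(tam_account_ids: set[str], contacts: list[Contact]) -> float:
    """Share of TAM accounts with at least one contact that has a usable email."""
    if not tam_account_ids:
        return 0.0
    covered = {c.account_id for c in contacts
               if c.email and c.account_id in tam_account_ids}
    return len(covered) / len(tam_account_ids)


def coverage_change(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point change, relative % change) between two coverage ratios."""
    points = round((after - before) * 100, 1)
    relative = round((after - before) / before * 100, 1) if before else float("inf")
    return points, relative


if __name__ == "__main__":
    tam = {"acct-1", "acct-2", "acct-3", "acct-4"}
    contacts = [
        Contact("acct-1", "vp.sales@example.com"),
        Contact("acct-2", None),                   # enrichment gap
        Contact("acct-3", "revops@example.com"),
        Contact("acct-9", "someone@example.com"),  # outside the TAM, ignored
    ]
    print(contact_coverage(tam, contacts))  # 0.5
    print(coverage_change(0.50, 0.80))      # (30.0, 60.0)
```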

There's a second dimension worth highlighting as portcos make decisions on GTM vendors. A typical portco's GTM stack often includes 10 or more point solutions, from workflow tools to enrichment vendors to sequencing platforms, and all of them are under competitive pressure from AI. Agentic coding tools now allow startups to replicate mid-market SaaS functionality in weeks, undercutting legacy pricing models and forcing enterprise buyers to ask whether they should build rather than buy. That makes the infrastructure-versus-tooling distinction more consequential than it would be in a normal market. Point solutions built around automating a specific manual workflow face a different competitive dynamic than infrastructure built around orchestrating data and driving action across the commercial function. The former can be replicated; the latter compounds with use. In other words, GTM vendor decisions at portcos aren't purely procurement calls. They're compounding infrastructure decisions that will determine how fast the commercial function learns, adapts, and widens its lead over the next five years.

What Works, What Doesn't, and Why Most Vendor Pitches Get It Wrong

PE operating partners are sitting through a lot of vendor pitches right now. There are autonomous SDR companies, AI email platforms, and "agentic outbound" startups galore. A handful will generate real returns. The majority will burn through budget and erode organizational trust in AI as a category, the kind of damage that takes quarters to repair and makes every subsequent implementation harder to justify internally.

From our vantage point, working closely with GTM teams and seeing how hundreds of mid-market and enterprise companies structure their GTM workflows, we see the landscape breaking into three tiers.

Production-ready today: account research and enrichment, company scoring with transparent logic, and pre-meeting preparation that combines CRM history with third-party enrichment. These work for a specific reason: the correctness bar is relatively low, outputs are easy for a human to validate in seconds, and mistakes don't damage customer relationships. A company score reading '85: hiring RevOps, raised Series B, matches ICP on six dimensions' is useful at 80% accuracy, and it gets better as the data improves. For a 50-person sales team taking four meetings daily, automated research reclaims 50+ hours per week, which is the equivalent of a full-time research analyst.
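"Transparent logic" can be as simple as a score that carries its own reasons. This is a minimal sketch, not how any particular product computes scores: the rules, the weights, and the account fields (`open_roles`, `last_round`, `icp_dimensions_matched`) are hypothetical, chosen only to reproduce the example score above.

```python
from dataclasses import dataclass
from typing import Any, Callable

Account = dict[str, Any]


@dataclass(frozen=True)
class Rule:
    reason: str                          # explanation shown to the rep next to the score
    points: int                          # contribution when the rule matches
    matches: Callable[[Account], bool]   # predicate over an enriched account record


def score_account(account: Account, rules: list[Rule]) -> tuple[int, list[str]]:
    """Sum the points of every matching rule (capped at 100) and keep the reasons."""
    matched = [rule for rule in rules if rule.matches(account)]
    score = min(100, sum(rule.points for rule in matched))
    return score, [rule.reason for rule in matched]


def explain(account: Account, rules: list[Rule]) -> str:
    """Render the score the way a rep would see it: number first, then reasons."""
    score, reasons = score_account(account, rules)
    return f"{score}: {', '.join(reasons)}" if reasons else f"{score}: no rules matched"


# Hypothetical rules and weights, for illustration only.
RULES = [
    Rule("hiring RevOps", 25, lambda a: "RevOps" in a.get("open_roles", [])),
    Rule("raised Series B", 20, lambda a: a.get("last_round") == "Series B"),
    Rule("matches ICP on six dimensions", 40,
         lambda a: a.get("icp_dimensions_matched", 0) >= 6),
]

if __name__ == "__main__":
    account = {"open_roles": ["RevOps", "AE"], "last_round": "Series B",
               "icp_dimensions_matched": 6}
    print(explain(account, RULES))
    # 85: hiring RevOps, raised Series B, matches ICP on six dimensions
```

Because every point traces back to a named rule, a rep can check the reasons in seconds, and a wrong rule is easy to spot and reweight.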

Rep-in-the-loop workflows: personalized messaging, contact prioritization, and basic objection handling. The consistent pattern here is that AI drafts and humans validate before anything reaches a prospect. A rep can review ten AI-generated emails in the time it takes to write two from scratch. But removing the human review step introduces errors on three fronts: tone, relevance, and factual accuracy. All three damage response rates and brand perception in ways that compound over time. The economics still favor a human-reviewed AI workflow over a fully manual one, and in our experience, reps prefer it once they've used it for a week.
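The "AI drafts, humans validate" pattern is, at bottom, a gate between generation and sending. A minimal sketch, with hypothetical `Draft`, `review`, and `send` names standing in for whatever sequencing tool a portco actually uses:

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFTED = "drafted"
    APPROVED = "approved"
    REJECTED = "rejected"
    SENT = "sent"


@dataclass
class Draft:
    prospect: str
    body: str
    status: Status = Status.DRAFTED


def review(draft: Draft, approve: bool, edited_body: Optional[str] = None) -> Draft:
    """A rep approves (optionally after editing) or rejects an AI-generated draft."""
    if edited_body is not None:
        draft.body = edited_body
    draft.status = Status.APPROVED if approve else Status.REJECTED
    return draft


def send(draft: Draft, outbox: list[str]) -> None:
    """Refuse to send anything not currently approved (this also blocks re-sending)."""
    if draft.status is not Status.APPROVED:
        raise PermissionError(f"draft for {draft.prospect} has not been approved by a rep")
    outbox.append(f"To {draft.prospect}: {draft.body}")
    draft.status = Status.SENT


if __name__ == "__main__":
    outbox: list[str] = []
    good = review(Draft("Acme", "Saw you're hiring RevOps..."), approve=True)
    bad = review(Draft("Beta", "Congrats on your Series C!"), approve=False)  # factual error caught
    send(good, outbox)
    try:
        send(bad, outbox)
    except PermissionError as err:
        print(err)
    print(outbox)
```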

Not ready for production yet (this is where most of the damage happens): fully autonomous outbound SDRs, where AI handles TAM sourcing, targeting, messaging, sending, objection handling, and meeting booking without human involvement. The failure pattern is remarkably consistent. In week one, it looks promising: a few meetings get booked, enough to generate false confidence. In week two, open rates decline as the system trains spam filters against the company domain. In week three, messaging quality degrades; with no learning loop, the system simply repeats itself at scale. By the end of the first month, it has burned through target accounts, damaged domain reputation, and generated minimal pipeline.

One thing we hear consistently from operators who've been through successful implementations is that the technology is the easy part; the harder challenge is change management, which includes re-skilling GTM leadership and practitioners to think differently about their work in an AI-native environment. Long-entrenched workflows, quarterly campaign planning, and siloed team structures don't change just because a new tool gets deployed. Leaders need to invest in helping their people develop new mental models alongside the new infrastructure.

We have seen repeatedly that starting with narrow, high-accuracy use cases (account research, intent signal tracking, pre-meeting briefs, inbound qualification and routing) and expanding from there consistently outperforms broad deployments that spread across too many tasks at once.

One caveat to this framing: these tiers shift as the technology matures. What's unreliable today may be production-ready in a matter of months to years. The evaluation framework stays constant regardless: assess accuracy in context, measure the cost of errors, decide whether human oversight is worth the throughput tradeoff, and revisit the tier placement quarterly.
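The four-part evaluation can be written down as a small decision function, which makes the quarterly re-check repeatable. This is a sketch under stated assumptions: the accuracy thresholds (0.8 for internal outputs, 0.99 for unattended customer-facing outputs) and the timing figures in the example are hypothetical, not benchmarks from our work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    accuracy: float         # share of outputs judged correct in your own context (0-1)
    customer_facing: bool   # do errors reach prospects or customers?
    review_seconds: float   # time for a human to validate one output
    manual_seconds: float   # time for a human to produce one output from scratch


def place_in_tier(uc: UseCase,
                  internal_min_accuracy: float = 0.8,
                  autonomous_min_accuracy: float = 0.99) -> str:
    """Map measured accuracy, error cost, and review economics to a tier.
    Thresholds are hypothetical defaults; re-run with fresh measurements each quarter."""
    # Errors stay internal and are cheap to catch: moderate accuracy is enough.
    if not uc.customer_facing and uc.accuracy >= internal_min_accuracy:
        return "production-ready"
    # Customer-facing output can run unattended only at very high accuracy.
    if uc.customer_facing and uc.accuracy >= autonomous_min_accuracy:
        return "production-ready"
    # Otherwise keep a human in the loop, but only if reviewing beats doing it manually.
    if uc.review_seconds < uc.manual_seconds:
        return "rep-in-the-loop"
    return "not ready"


if __name__ == "__main__":
    cases = [
        UseCase("account research", 0.80, False, 20, 900),
        # Ten reviews in the time of two manual emails: review takes 1/5 as long.
        UseCase("outbound email drafts", 0.70, True, 60, 300),
        UseCase("auto objection replies", 0.60, True, 400, 300),
    ]
    for uc in cases:
        print(f"{uc.name}: {place_in_tier(uc)}")
```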

AI-Enabled GTM in Action: Portfolio Company Results

A-LIGN (Hg portfolio company) is a cybersecurity compliance firm serving 5,000+ organizations globally. The starting point was familiar to anyone who's audited a portco's commercial function. A-LIGN was paying $60K annually for a contractor to manually research 2,000 accounts over six months. The output was a spreadsheet with basic yes/no answers: Does this company do SOC 2 audits? Yes. Does it have a compliance program? Yes. Accurate, but thin, and not particularly actionable.

Their ops leader identified the core problem clearly: the data told reps that a company does SOC 2 audits, but what reps actually needed to know was who does their SOC 2 and why. Which competitor holds the relationship? What's the contract cycle? What compliance framework gaps exist? The data wasn't actionable. It was a filing cabinet with 2,000 folders and no context inside any of them.

Building automated research workflows on Clay, A-LIGN replaced six months of manual work with one month of automated execution. The output grew from 30,000 basic data points to 450,000 detailed insights, a 15x increase in data depth, at a cost of $50K for the platform versus $60K for the manual contractor. At the same time, Salesforce admin work dropped from 48 hours to 2 hours per cycle.

Now, instead of telling reps "this company does compliance," the system shows which specific services a company uses, why it needs them, which competitors hold the relationship, and where the switching triggers are. That intelligence powered a competitive displacement workflow that couldn't have existed without the data layer underneath it. The result: $5.7M in qualified pipeline from competitive displacement research alone and $3.3M in closed revenue directly attributed to displacement intelligence.

The cultural shift that followed may be the most durable outcome. Non-technical reps started defaulting to "can we automate this?" when they hit a manual process. A-LIGN now treats Clay as core revenue infrastructure, which marks the difference between a software purchase and an operating and cultural shift.

Terrapinn, a global B2B events company with 150 sales reps covering 35 industry verticals, had a different bottleneck: its third-party data provider delivered static lists with no enrichment, no signals, and no context about why a prospect belonged at a specific event. Clay replaced months of researcher work with enriched, signal-based prospect lists built in minutes, ICP-matched across all 35 verticals, and updated in real time. Revenue per rep increased 19% while prospect acquisition cost dropped 90%. For a 150-person sales organization, that combination changes the unit economics of the commercial model materially.

Recharge, the subscription billing platform processing $30 billion annually for 20,000+ merchants, had the opposite problem. It wasn't missing data; it had ten years of customer and product data sitting in siloed systems. Clay unified that data and connected it to campaign execution. One campaign per quarter became eight simultaneous campaigns. Opportunity conversion improved 20%, meeting conversion improved 12%, and call conversion improved 8x. Instead of adding net new data points, Clay made the existing data actionable.

These aren't isolated wins. They're outputs of the same underlying architecture, which is vertical-agnostic by design. A-LIGN's workflow runs on enriched account intelligence, competitor relationship mapping, and contract cycle signals. Terrapinn's runs on enriched firmographics and event-matched ICPs. Recharge's runs on unified product data connected to campaign execution. The surface details differ by industry, but the architecture stays the same. A workflow built for cybersecurity compliance can be reconfigured for healthcare SaaS, professional services, or fintech with the inputs swapped and the logic intact, because the pattern is always the same: source, enrich, score, trigger, and act. That's what makes GTM playbook building a compounding asset for PE operators specifically. Each implementation produces a documented template that makes the next deployment faster and cheaper.
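Here is a minimal Python sketch of the source, enrich, score, trigger, and act pattern, assuming every stage can be expressed as a plain function. The point is structural: `run_pipeline` is written once, and each vertical supplies only its own configuration. All names, accounts, and lookup data are hypothetical; in practice each stage would call real enrichment sources and write to a CRM rather than return strings.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable

Account = dict[str, Any]


@dataclass(frozen=True)
class VerticalConfig:
    """Everything vertical-specific lives here; run_pipeline never changes."""
    name: str
    source: Callable[[], Iterable[Account]]   # where candidate accounts come from
    enrich: Callable[[Account], Account]      # adds vertical-specific fields
    score: Callable[[Account], int]           # 0-100 fit score
    threshold: int                            # minimum score that triggers action
    act: Callable[[Account], str]             # e.g. create a rep task or draft


def run_pipeline(cfg: VerticalConfig) -> list[str]:
    """Source -> enrich -> score -> trigger -> act."""
    actions: list[str] = []
    for account in cfg.source():
        enriched = cfg.enrich(account)
        if cfg.score(enriched) >= cfg.threshold:  # trigger
            actions.append(cfg.act(enriched))
    return actions


# Hypothetical lookup standing in for competitor-relationship enrichment:
# account name -> (incumbent vendor, months until contract renewal)
INCUMBENTS = {"Acme": ("CompetitorX", 4), "Beta": (None, None)}

compliance = VerticalConfig(
    name="cybersecurity compliance",
    source=lambda: [{"name": "Acme"}, {"name": "Beta"}],
    enrich=lambda a: {**a, "incumbent": INCUMBENTS[a["name"]][0],
                      "renewal_months": INCUMBENTS[a["name"]][1]},
    score=lambda a: 90 if a["incumbent"] and a["renewal_months"] <= 6 else 20,
    threshold=70,
    act=lambda a: f"displacement play: {a['name']} (incumbent {a['incumbent']})",
)

events = VerticalConfig(
    name="B2B events",
    source=lambda: [{"name": "Gamma", "industry": "fintech"},
                    {"name": "Delta", "industry": "retail"}],
    enrich=lambda a: {**a, "icp_match": a["industry"] == "fintech"},
    score=lambda a: 80 if a["icp_match"] else 10,
    threshold=70,
    act=lambda a: f"invite {a['name']} to the fintech summit",
)

if __name__ == "__main__":
    for cfg in (compliance, events):
        print(cfg.name, run_pipeline(cfg))
```

Swapping verticals means writing a new `VerticalConfig`, not a new pipeline, which is the property that lets one portco's template seed the next deployment.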

The Diligence Red Flag Isn't the Metrics

The most important thing to understand about AI-enabled GTM is that it compounds. A company that builds clean data infrastructure, instruments its signals, and connects those signals to automated action gets more efficient and more intelligent over time. More campaigns generate more outcome data, better outcome data improves targeting, and better targeting improves conversion. Higher conversion funds more experimentation. Each cycle tightens the loop. The advantage is exponential.

Companies without data and orchestration infrastructure can't access this loop. They run campaigns manually, gather data inconsistently, and make decisions on intuition or lagging reports. Each quarter, they're roughly as good at GTM as they were the quarter before. Meanwhile, the company with the right infrastructure layer is compounding. That gap is what we suspect sophisticated buyers are beginning to price into diligence.

"Clean, enriched CRM data is now table stakes across the portfolio, you can't run a modern GTM organization without it," said Jack Claydon, AI Value Creation at Hg. "What's exciting is what comes next: orchestration workflows that automate key commercial processes, driving efficiency gains whilst simultaneously improving sales performance. That's where the real value creation is happening."

The metrics that surface this gap aren't new, but their interpretation has changed. Historically, a declining win rate or rising CAC signaled execution problems: the wrong messaging, the wrong reps, or the wrong segments. Today those same signals increasingly indicate a structural deficit: the absence of feedback infrastructure that would have caught and corrected those problems automatically. The question a buyer is now asking isn't just "why are these numbers bad?" It's "does this company have the infrastructure to get better, or will we have to build it from scratch?"

The compounding also operates across a portfolio, not just within a single company. Firms that build AI-enabled GTM infrastructure consistently across multiple portcos gain the ability to identify what "good" looks like in a given segment by observing what works at scale, and then replicate it systematically. Which outbound signals most reliably predict sub-90-day sales cycles in vertical SaaS? Which expansion triggers drive the highest NRR in the $5–20M ARR range? Which GTM tactics work best as the market landscape keeps changing? These are questions you can only answer with data from many companies.
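As a rough illustration of the first question, here is a pooled analysis over deal records from several portcos. It is a sketch under assumptions: each hypothetical `Deal` record carries the outbound signal that opened it and its cycle length, and signals observed at fewer than two portcos are dropped so that one company's quirks don't masquerade as a portfolio pattern. A real analysis would also need larger samples and controls for deal size and segment mix.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Deal:
    portco: str
    segment: str        # e.g. "vertical SaaS"
    first_signal: str   # outbound signal that opened the deal
    cycle_days: int     # first touch to close, in days


def fast_cycle_rate_by_signal(deals: list[Deal], segment: str,
                              max_days: int = 90, min_portcos: int = 2) -> dict[str, float]:
    """Per signal, the share of closed deals in `segment` with a cycle under `max_days`,
    pooled across portcos and sorted from highest to lowest rate."""
    totals: dict[str, int] = defaultdict(int)
    fast: dict[str, int] = defaultdict(int)
    portcos: dict[str, set[str]] = defaultdict(set)
    for d in deals:
        if d.segment != segment:
            continue
        totals[d.first_signal] += 1
        fast[d.first_signal] += int(d.cycle_days < max_days)
        portcos[d.first_signal].add(d.portco)
    rates = {s: fast[s] / totals[s] for s in totals if len(portcos[s]) >= min_portcos}
    return dict(sorted(rates.items(), key=lambda kv: kv[1], reverse=True))


if __name__ == "__main__":
    deals = [
        Deal("portco-a", "vertical SaaS", "funding round", 60),
        Deal("portco-b", "vertical SaaS", "funding round", 120),
        Deal("portco-a", "vertical SaaS", "new RevOps hire", 45),
        Deal("portco-c", "vertical SaaS", "new RevOps hire", 70),
        Deal("portco-b", "vertical SaaS", "pricing page visit", 30),  # one portco only: skipped
        Deal("portco-c", "fintech", "funding round", 40),              # other segment: ignored
    ]
    print(fast_cycle_rate_by_signal(deals, "vertical SaaS"))
    # {'new RevOps hire': 1.0, 'funding round': 0.5}
```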

"Most PE firms talk about learning from their portfolio, we are actually doing it. Similar GTM infrastructure across nearly 60 companies means patterns surface fast. Which playbooks drive pipeline in vertical SaaS, which use cases transfer directly, which ones need adapting. The rebuild-from-scratch tax disappears. That's where the compounding starts," said Chris Ross, Head of Portfolio Growth at Hg.

This is where PE firms have a structural advantage that individual companies don't. When the moat is speed, the ability to identify a winning tactic at one portco and deploy it across ten others compounds the benefit in a way no single company can replicate alone.

Ten years ago, ERP infrastructure became a source of diligence discounts. Companies with fragmented back-office systems saw valuations marked down as buyers priced in the remediation cost. We believe GTM infrastructure will follow the same pattern. What's different this time is that the penalty isn't just the remediation cost: it's the compounding value the company has already failed to accumulate. Think of the campaigns not run, the signals not captured, and the feedback loops not closed. That value is harder to price and harder to recover. Companies with defensible GTM infrastructure are being underwritten with conviction; companies without it face a new kind of scrutiny that goes well beyond what shows up in a standard QoE.

"We've seen this process play out before with ERP," said Steven Wastie, GTM Operating Partner at H.I.G. Capital. "The companies that dragged their feet lost years of operating leverage they couldn't get back. GTM infrastructure will be following the same script as AI redefines workflows, systems and organizations."

Where the Space Is Going

There's a deeper shift happening beneath the tactical question of which GTM workflows to automate, and it's worth naming directly when making infrastructure decisions for a portfolio company. The previous generation of GTM software was built primarily as a system of record. The CRM captured what happened, the marketing automation platform tracked engagement, and the intent tool logged signals. Data flowed in, got cleaned, got organized, and sat there waiting for a human to act on it. The system's job was to remember.

What's emerging now is something categorically different: GTM infrastructure as a system of action and context. The gap between signal and response collapses. A company posts a job listing for a RevOps hire, and that signal triggers a workflow that enriches a contact record, scores the account against the ICP, drafts a personalized outreach sequence, and routes it to the right rep, all before a human has opened their laptop. The system interprets, decides, and acts.

That shift changes the infrastructure decision significantly. In a system-of-record world, the key questions were about data quality and CRM hygiene. In a system-of-action world, the key question is whether your infrastructure is built to orchestrate: whether it can connect signals to actions across tools, contexts, and workflows without requiring a human to mediate every step.

It also changes what "competitive moat" means in a commercial context. A lot of GTM over the past decade was built, implicitly, on friction and information asymmetry: on knowing things about prospects that competitors didn't, or on buyer inertia making it easier to stay than to switch. AI is systematically dissolving both. Information that used to require six browser tabs and a research contractor is now a single prompt. Switching costs that relied on buyer laziness are being optimized away as agents evaluate alternatives on pure fit and price. The GTM approaches that hold their value in this environment aren't capitalizing on friction anymore. They're built around speed, context, and the ability to act on better signals faster than anyone else. That's the thread connecting the Three Laws of GTM to the infrastructure argument: the sustainable advantage was never the information itself; it was always the ability to find new advantages faster than competitors could copy the last ones.

One question PE operators are increasingly fielding from portco leadership: "We're already getting pitched by OpenAI and Anthropic directly. Do we need anything else?" The answer matters for how you think about the infrastructure stack.

The model layer and the orchestration layer solve different problems. OpenAI and Anthropic provide the reasoning capability, meaning the intelligence that can interpret a signal, draft a message, or summarize a call transcript. What they don't provide is the surrounding structure that makes that intelligence usable in a real business: the data pipelines that feed clean, enriched inputs into the model, the guardrails that keep outputs within defined parameters, the workflow logic that triggers the right action at the right time, and the handoff layer that writes results back into Salesforce, the data warehouse, or whatever system of record the portco runs on.
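To make the division of labor concrete, here is a minimal sketch in which the model is just a function from prompt to text and everything around it is orchestration. The warehouse and CRM are plain dictionaries, the guardrail rules are hypothetical, and no real OpenAI or Anthropic SDK call is shown; a stub stands in for the model.

```python
from typing import Any, Callable

ModelFn = Callable[[str], str]          # any text-in, text-out model call (SDK not shown)
BANNED_PHRASES = ("guarantee", "100%")  # hypothetical guardrail list


def passes_guardrails(text: str, account_name: str, max_chars: int = 600) -> bool:
    """Keep outputs short, on-topic (must name the account), and free of banned claims."""
    lowered = text.lower()
    return (len(text) <= max_chars
            and account_name.lower() in lowered
            and not any(p in lowered for p in BANNED_PHRASES))


def orchestrate(account_id: str, warehouse: dict[str, dict[str, Any]],
                crm: dict[str, dict[str, Any]], model: ModelFn) -> bool:
    """Data pipeline -> model -> guardrails -> write-back. Returns True if a draft was saved."""
    record = warehouse.get(account_id)
    # 1. Data layer: only proceed on complete, enriched inputs.
    if not record or not all(record.get(k) for k in ("name", "signal")):
        return False
    # 2. Model layer: the reasoning step.
    draft = model(f"Write a two-sentence opener for {record['name']} "
                  f"that references this signal: {record['signal']}")
    # 3. Guardrails: reject outputs outside defined parameters.
    entry = crm.setdefault(account_id, {})
    if not passes_guardrails(draft, record["name"]):
        entry["status"] = "draft rejected by guardrails"
        return False
    # 4. Handoff: write the result back to the system of record for rep review.
    entry.update({"draft": draft, "status": "ready for rep review"})
    return True


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call."""
    return "Noticed Acme is hiring a RevOps lead. Happy to share what peers did first."


if __name__ == "__main__":
    warehouse = {"acct-1": {"name": "Acme", "signal": "hiring a RevOps lead"}}
    crm: dict[str, dict[str, Any]] = {}
    print(orchestrate("acct-1", warehouse, crm, stub_model), crm["acct-1"]["status"])
```

Swapping `stub_model` for a different provider changes one argument; the data checks, guardrails, and write-back stay put, which is the sense in which the orchestration layer is separate from the model layer.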

The orchestration layer matters especially for PE value creation teams, because what they need isn't open-ended AI experimentation. They need low-risk, repeatable playbooks they can deploy across a portfolio and defend in a board meeting. That means packaging proven GTM use cases, reducing the in-house build burden, and connecting model intelligence to the commercial systems portcos already use.

It also matters for the parts of the portfolio that don't look like SaaS. As more PE firms expand into healthcare, business services, and industrials, the GTM infrastructure problem becomes more difficult. Data is more fragmented, workflows are more complex, and the sales motion often involves longer cycles, more stakeholders, and less digital exhaust to work from. These are exactly the conditions where the data and orchestration layer adds the most value: clean, well-formatted data doesn't exist yet, and someone has to find it and impose structure on it before a model can do anything useful. A healthcare services company with patient records in one system, referral relationships in another, and billing data in a third isn't going to get much value from an AI model on its own. It needs an enrichment and workflow layer that can unify those inputs and connect them to the commercial motion. That's a solvable problem, and the firms building this infrastructure in non-SaaS verticals now are accumulating a compounding advantage over competitors who are still waiting for their data to get clean on its own.

The firms that understand this distinction (infrastructure vs. tooling; system of action vs. system of record) are the ones building durable commercial advantages in their portfolios. Within 18–24 months, the practical expectation is that autonomous agents will be handling significant portions of account management, outbound sequencing, and expansion intelligence, while humans focus on relationship building and high-judgment tasks. The companies that will be positioned to deploy those agents well are the ones building the data foundation and adopting the right infrastructure now.

Score Your Portfolio

The diligence table tells you where the problems are. This rubric gives you a starting point for gauging how far each portco has to go. Score each dimension on a 1–3 scale and add up the results; with seven dimensions, totals range from 7 to 21. It typically takes about 15 minutes per company and surfaces the intervention priorities quickly.

Weighting note: if Data Foundation scores a 1, start there regardless of what the other dimensions show. Nothing downstream works reliably without clean, enriched, unified data. It's the dependency everything else sits on, and it's also where the ROI on fixing it tends to show up fastest.

Total scores of 7–10 indicate that immediate action is needed. The foundation work has to come first: clean, deduplicate, enrich, and establish a single source of truth for TAM definition.

Scores of 11–16 mean the foundation exists and needs acceleration; the focus shifts to deploying AI workflows across core revenue motions.

Companies scoring 17–21 are ready to work on feedback loops and compounding advantages. That's the flywheel: more usage generates richer data, and richer data generates better performance.
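For teams scoring many portcos, the banding logic and the weighting note are easy to encode. A minimal sketch: the seven-dimension count is inferred from the 7–21 range on a 1–3 scale, "Data Foundation" is the only dimension named in this section, and the other dimension names in the example are placeholders.

```python
DATA_FOUNDATION = "Data Foundation"
NUM_DIMENSIONS = 7  # inferred: a 1-3 scale with totals of 7-21 implies seven dimensions


def assess(scores: dict[str, int]) -> tuple[int, str]:
    """Total the rubric and return (total, recommended focus)."""
    if len(scores) != NUM_DIMENSIONS or DATA_FOUNDATION not in scores:
        raise ValueError(f"expected {NUM_DIMENSIONS} dimensions including {DATA_FOUNDATION!r}")
    if any(s not in (1, 2, 3) for s in scores.values()):
        raise ValueError("each dimension is scored 1, 2, or 3")
    total = sum(scores.values())
    # Weighting note: a Data Foundation score of 1 overrides the total.
    if scores[DATA_FOUNDATION] == 1 or total <= 10:
        return total, "foundation first"   # clean, deduplicate, enrich, single TAM source
    if total <= 16:
        return total, "accelerate"         # deploy AI workflows across core revenue motions
    return total, "compound"               # build feedback loops


if __name__ == "__main__":
    # Placeholder names for the six dimensions not named in the text above.
    others = [f"dimension {i}" for i in range(2, 8)]
    strong_but_dirty = {DATA_FOUNDATION: 1, **{d: 3 for d in others}}
    mid_pack = {DATA_FOUNDATION: 2, **{d: 2 for d in others}}
    print(assess(strong_but_dirty))  # (19, 'foundation first')
    print(assess(mid_pack))          # (14, 'accelerate')
```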

Frequently Asked Questions

What does AI-enabled GTM actually mean for a PE portfolio company?

AI-enabled GTM means connecting data, enrichment, signals, and automated workflows so that commercial teams can act on better information faster, without proportionally scaling headcount. For portcos, it typically starts with data quality and account research, then expands to automated targeting, personalized outreach, and signal-triggered workflows across the revenue motion.

Which AI GTM use cases are production-ready today?

Account research and enrichment, company scoring with transparent logic, pre-meeting preparation, inbound qualification and routing, and intent signal tracking are all production-ready. Rep-in-the-loop workflows for personalized messaging also deliver reliable results when humans review AI drafts before anything reaches a prospect. Fully autonomous outbound SDRs are not yet production-ready and consistently underperform in practice.

How do PE firms adapt their GTM models as AI offerings change?

The firms generating the best results start narrow, deploying high-accuracy use cases first and expanding from there. They invest in change management alongside new infrastructure, re-skilling GTM leadership to think differently about their work in an AI-native environment. They also treat GTM vendor decisions as compounding infrastructure choices, not one-off procurement calls, because the infrastructure layer determines how fast the commercial function learns and adapts over the next several years.

Why isn't it enough to just use OpenAI or Anthropic directly?

Foundation models provide reasoning capability but not the surrounding structure a real business needs: clean data pipelines, workflow logic, guardrails, and handoff layers that write results back into Salesforce or a data warehouse. PE value creation teams specifically need low-risk, repeatable playbooks they can deploy across a portfolio, and that requires an orchestration layer on top of the model layer.

How does AI-enabled GTM infrastructure affect diligence and valuation?

Sophisticated buyers are beginning to price GTM infrastructure into diligence the same way ERP infrastructure was priced in a decade ago. Companies without clean data and orchestration infrastructure face scrutiny that goes beyond standard QoE metrics. The penalty isn't just remediation cost; it's the compounding value the company has already failed to accumulate through campaigns not run, signals not captured, and feedback loops not closed.

Acknowledgments

This paper was shaped by the generous input of our partners and leading PE practitioners. Thank you to Erik Kristjanson (Vista Equity Partners), Steven Wastie (H.I.G. Capital), Jack Claydon (Hg), and Chris Ross (Hg) for their candid feedback, challenge, and perspective throughout the drafting process.

Thanks also to the Clay team who put this together, including Sonia Hernandez, Mary Hanley, Lindsay Workman, Karan Parekh, Sameer Ziaee, Ziqi Deng, and Ted Brown.
